Portend: Advancing Outcomes of Machine Learning Models for Mission Success

Created November 2025

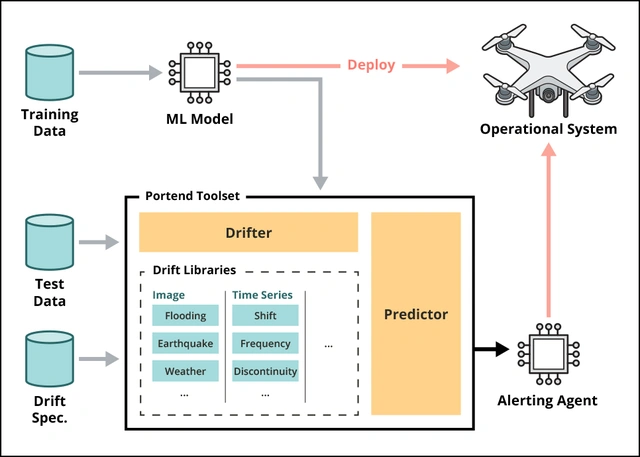

To increase trust in systems that depend on machine learning (ML), the Software Engineering Institute (SEI) developed Portend, an open source toolset that helps ML models succeed by enabling model developers to (1) synthesize a monitor for detecting out-of-distribution (OOD) data, (2) deploy that monitor in a live system to detect and flag unexpected inputs, (3) guide the ML model to take alternative actions when unexpected inputs threaten its performance, and (4) determine when the model needs retraining.

Assuring the performance of ML models will be increasingly essential to the Department of War’s (DoW’s) mission. These models, which find patterns in training datasets and make predictions based on those patterns, are powerful, but they can also be brittle. This fragility often appears when a model is fed data that is outside its effective domain, meaning the data is unexpected or dissimilar to the data on which the model was trained. When a model receives such data, it can fail, often silently and without warning. Through the Portend toolset, the SEI seeks to address this issue and increase model success, which can make the difference between mission failure and mission success.

Drifting off the Digital Cliff

ML models generally work well when their inputs are similar to the data on which they were trained. However, when the type or scope of the input data changes (a phenomenon known as drift), model performance often drops suddenly and dramatically, which is colloquially known as the “digital cliff effect.” In many cases, ML models cannot detect that they have gone off the digital cliff and keep producing incorrect output, which makes it difficult for developers to know when the models need to be retrained.
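
This page does not specify which statistics Portend's monitors use. As one common, generic way to notice that live inputs no longer resemble training data, the sketch below computes the two-sample Kolmogorov-Smirnov (KS) statistic, the largest gap between two empirical cumulative distribution functions, for a single numeric feature. The function name and example data are illustrative assumptions, not part of Portend.

```python
from bisect import bisect_right


def ks_statistic(reference: list[float], live: list[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: the largest vertical gap between
    the empirical CDFs of reference (training-like) data and live data."""
    if not reference or not live:
        raise ValueError("both samples must be non-empty")
    ref, cur = sorted(reference), sorted(live)
    n, m = len(ref), len(cur)
    largest_gap = 0
    # Both empirical CDFs only change at sample points, so checking them there suffices.
    for x in ref + cur:
        # Compare n*m*F_ref(x) with n*m*F_live(x) as integers to avoid rounding error.
        gap = abs(bisect_right(ref, x) * m - bisect_right(cur, x) * n)
        largest_gap = max(largest_gap, gap)
    return largest_gap / (n * m)


if __name__ == "__main__":
    training_like = list(range(100))          # stand-in for a training feature
    shifted = [x + 50 for x in range(100)]    # same shape, shifted by +50
    print(ks_statistic(training_like, training_like))  # → 0.0
    print(ks_statistic(training_like, shifted))        # → 0.5
```

A value near 0 means the two samples look alike; a value near 1 means they barely overlap. Turning the statistic into an alert still requires choosing a threshold, which is the kind of decision Portend is meant to inform.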

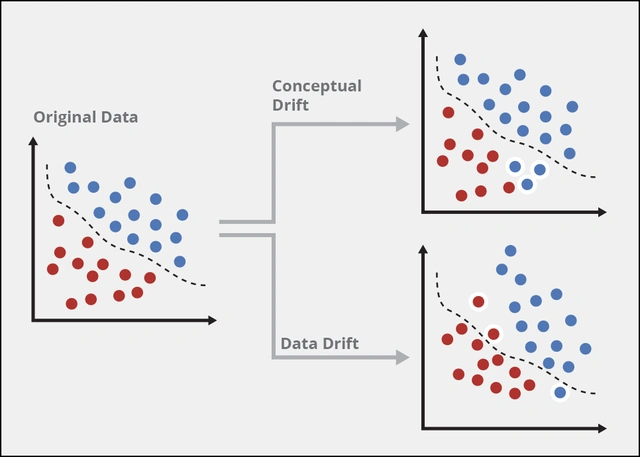

Two types of drift can occur. The first is concept drift, which happens when the relationship between the data and its labels changes. For example, if an ML model is used to distinguish between cars and trucks, concept drift occurs when model users change the definition of a truck. The second type is data drift, which occurs when the features of the items being classified change. If the features of trucks change, the model may need to be retrained to accommodate this shift.
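
In the notation commonly used in the drift literature (an addition here, not notation taken from Portend), let X be a model's inputs, Y the labels, P_0 the distribution seen during training, and P_t the distribution at deployment time t. The two types of drift can then be written as:

```latex
\begin{aligned}
\text{data drift:}    \quad & P_t(X) \neq P_0(X) \\
\text{concept drift:} \quad & P_t(Y \mid X) \neq P_0(Y \mid X)
\end{aligned}
```

In the car-and-truck example, redefining "truck" changes P(Y | X) even if the vehicles themselves look the same, while trucks whose features change alter P(X) even if the definition of a truck stays the same.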

While ML models may withstand a certain amount of drift without issue, safeguards are still needed. Specifically, model developers need to be alerted when drift has occurred or is likely to occur, so that they can schedule model retraining appropriately. Because retraining takes time and resources, it is a needless expense when done before it is required. Knowing when an ML model has drifted, or is likely to drift, off the digital cliff allows model developers to better maintain the model and, ultimately, to better support mission success.
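
The trade-off above (alert when drift appears, but avoid needless retraining) can be expressed as a simple alert policy. The sketch below is an assumption for illustration, not Portend's alerting logic: the class name and the `threshold` and `patience` parameters are hypothetical, and the per-window drift metric could be any drift score.

```python
from dataclasses import dataclass, field


@dataclass
class RetrainingAlert:
    """Hypothetical policy: recommend retraining only after the drift metric
    exceeds `threshold` in `patience` consecutive monitoring windows, so that
    one noisy window does not trigger a costly retrain."""
    threshold: float
    patience: int = 3
    _streak: int = field(default=0, init=False)

    def update(self, drift_metric: float) -> bool:
        """Record one window's drift metric; return True if retraining is recommended."""
        if drift_metric > self.threshold:
            self._streak += 1
        else:
            self._streak = 0  # drift subsided, so start counting again
        return self._streak >= self.patience


if __name__ == "__main__":
    alert = RetrainingAlert(threshold=0.2, patience=3)
    window_metrics = [0.05, 0.25, 0.10, 0.30, 0.35, 0.40]
    print([alert.update(m) for m in window_metrics])
    # → [False, False, False, False, False, True]
```

Raising `patience` trades slower alerts for fewer false alarms; the right setting depends on how costly missed drift is compared with an unnecessary retrain.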

Creating Guardrails Against Drift

To address these challenges, the SEI developed Portend, an end-to-end solution that simulates drift in a model’s input data, sets drift thresholds, and alerts system users when those thresholds are exceeded. Portend has two main tools: the drifter and the predictor. To simulate drift, the drifter generates a drifted dataset from a base dataset and a drift function. The predictor runs a drift-detection experiment and generates predicted metrics and thresholds for analysis.
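
The drifter-then-predictor flow described above can be mimicked on a one-dimensional numeric dataset. This is a minimal sketch under stated assumptions, not Portend's API or algorithms: the drift function (a mean shift), the drift metric (change in mean measured in reference standard deviations), the severity levels, and the rule for deriving a threshold (the metric value at the largest severity the operator still tolerates) are all hypothetical stand-ins.

```python
import random
import statistics
from typing import Callable

# A drift function takes a dataset and a severity and returns a drifted copy.
DriftFn = Callable[[list[float], float], list[float]]


def mean_shift(data: list[float], severity: float) -> list[float]:
    """Hypothetical drift function: offset every value by `severity`."""
    return [x + severity for x in data]


def drifter(base: list[float], drift_fn: DriftFn,
            severities: list[float]) -> dict[float, list[float]]:
    """Generate one drifted dataset per severity level from a base dataset."""
    return {s: drift_fn(base, s) for s in severities}


def standardized_mean_shift(reference: list[float], live: list[float]) -> float:
    """Hypothetical drift metric: change in mean, in reference standard deviations."""
    shift = abs(statistics.fmean(live) - statistics.fmean(reference))
    return shift / statistics.stdev(reference)


def predictor(base: list[float], drifted: dict[float, list[float]],
              tolerable_severity: float) -> tuple[dict[float, float], float]:
    """Compute the metric for each drifted dataset and derive an alert threshold:
    the metric value at the largest severity the operator still tolerates."""
    if tolerable_severity not in drifted:
        raise KeyError(f"no drifted dataset for severity {tolerable_severity}")
    metrics = {s: standardized_mean_shift(base, d) for s, d in drifted.items()}
    return metrics, metrics[tolerable_severity]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are repeatable
    base = [rng.gauss(0.0, 1.0) for _ in range(1000)]
    drifted = drifter(base, mean_shift, severities=[0.0, 0.5, 1.0, 2.0])
    metrics, threshold = predictor(base, drifted, tolerable_severity=0.5)
    for severity, value in metrics.items():
        print(f"severity={severity}: metric={value:.2f}")
    print(f"alert when metric > {threshold:.2f}")
```

Separating drift generation from metric evaluation means either half can be swapped out (a different drift function, a different metric) without touching the other.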

Together, the drifter and predictor enable users to easily configure their model for different scenarios in multiple data modalities (e.g., images, time series, and audio). As a flexible toolset, Portend supports the addition of new ML models and complex algorithms, custom datasets, drift functions, and drift-detection metrics. The toolset also includes a library of drift generation functions for a variety of drift conditions.
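
One generic way to make a library of drift functions extensible across modalities is a name-keyed registry that custom functions can join. The sketch below assumes that design purely for illustration; the registry, the `register_drift` decorator, `apply_drift`, and the three example functions (a time-series offset, a brightness change on a grayscale image stored as nested lists, and a user-defined audio gain change) are hypothetical and do not reflect Portend's actual interfaces.

```python
from typing import Any, Callable

# Hypothetical registry mapping a drift function's name to its implementation.
DRIFT_FUNCTIONS: dict[str, Callable[..., Any]] = {}


def register_drift(name: str):
    """Decorator that adds a drift function to the registry under `name`."""
    def wrap(fn):
        if name in DRIFT_FUNCTIONS:
            raise ValueError(f"drift function {name!r} is already registered")
        DRIFT_FUNCTIONS[name] = fn
        return fn
    return wrap


@register_drift("timeseries_offset")
def timeseries_offset(series: list[float], offset: float) -> list[float]:
    """Shift every sample of a time series by a constant (e.g., sensor bias)."""
    return [x + offset for x in series]


@register_drift("image_brightness")
def image_brightness(image: list[list[int]], factor: float) -> list[list[int]]:
    """Scale 8-bit grayscale pixel values, clipping to the valid 0-255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in image]


# A user-supplied custom drift function plugs in the same way.
@register_drift("audio_gain")
def audio_gain(samples: list[float], gain: float) -> list[float]:
    """Scale audio amplitude, clipping to the normalized [-1.0, 1.0] range."""
    return [min(1.0, max(-1.0, s * gain)) for s in samples]


def apply_drift(name: str, data: Any, **params: Any) -> Any:
    """Look up a registered drift function by name and apply it to `data`."""
    return DRIFT_FUNCTIONS[name](data, **params)


if __name__ == "__main__":
    print(sorted(DRIFT_FUNCTIONS))
    # → ['audio_gain', 'image_brightness', 'timeseries_offset']
    print(apply_drift("timeseries_offset", [1.0, 2.0], offset=0.5))  # → [1.5, 2.5]
    print(apply_drift("image_brightness", [[100, 200]], factor=1.5))  # → [[150, 255]]
```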

Overall, Portend allows model developers to plan for drift, understand model behavior in response to drift, forecast model maintenance needs, communicate monitoring requirements to model integrators and operators, and provide alerts that are operationally relevant.

Looking Ahead

As the Portend project continues, the SEI will design and implement automatic threshold generation for monitors, integrate a new library and monitor/alert detection into the Portend toolset, and customize features of the toolset to meet the needs of current and future clients.

Learn More

Portend Toolset Creates Guardrails for Machine Learning Data Drift

Newsletter

This SEI Bulletin newsletter was published on January 8, 2025.

Portend: Drift Planning in ML Systems

Fact Sheet

The SEI’s Portend tool can help you learn whether machine-learning models are functioning as intended.

Enhancing Machine Learning Assurance with Portend

Blog Post

This post introduces Portend, a new open source toolset that simulates data drift in machine learning models and identifies the proper metrics to detect drift in production environments.
